As the Paris 2024 Olympic Games continues, then #WOW continues with another Olympic themed challenge, this time set by Kyle.

Defining the core calculations

There’s a lot of LoD calcs used in this, so let’s set this up to start with.

Avg Age Per Sport

INT({FIXED [Sport]: AVG([Age])})

Avg Age Per Sport – Gold

INT({FIXED [Sport]: AVG(IF [Medal]=’Gold’ THEN [Age] END)})

Avg Age Per Sport – Silver

INT({FIXED [Sport]: AVG(IF [Medal]=’Silver’ THEN [Age] END)})

Avg Age Per Sport – Bronze

INT({FIXED [Sport]: AVG(IF [Medal]=’Bronze’ THEN [Age] END)})

Oldest Age Per Sport

INT({FIXED [Sport]: MAX([Age])})

Youngest Age Per Sport

INT({FIXED [Sport]: MIN([Age])})

Format all these fields to use Number Standard format so just whole numbers are displayed.

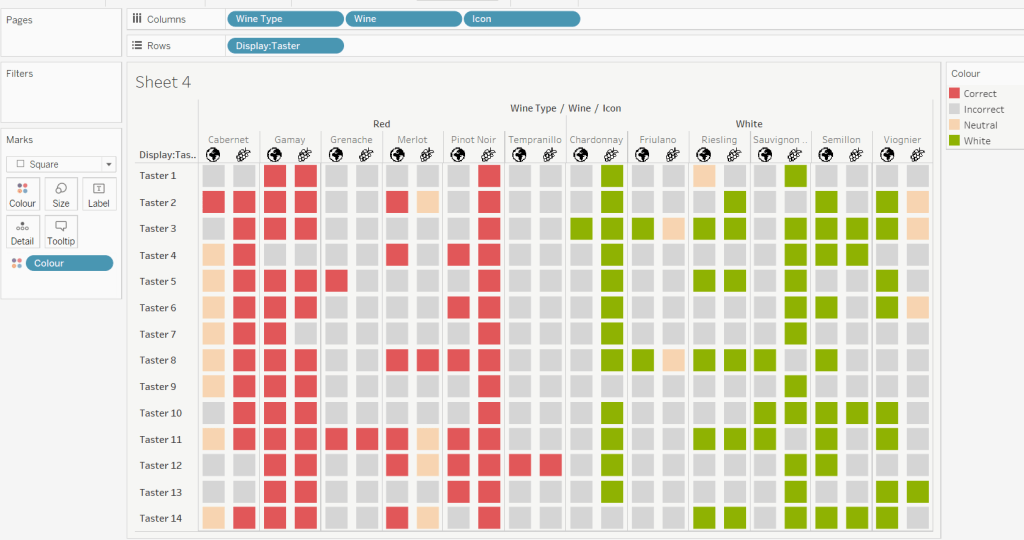

Let’s put all these out in a table – add Sport to Rows and then add all these measures

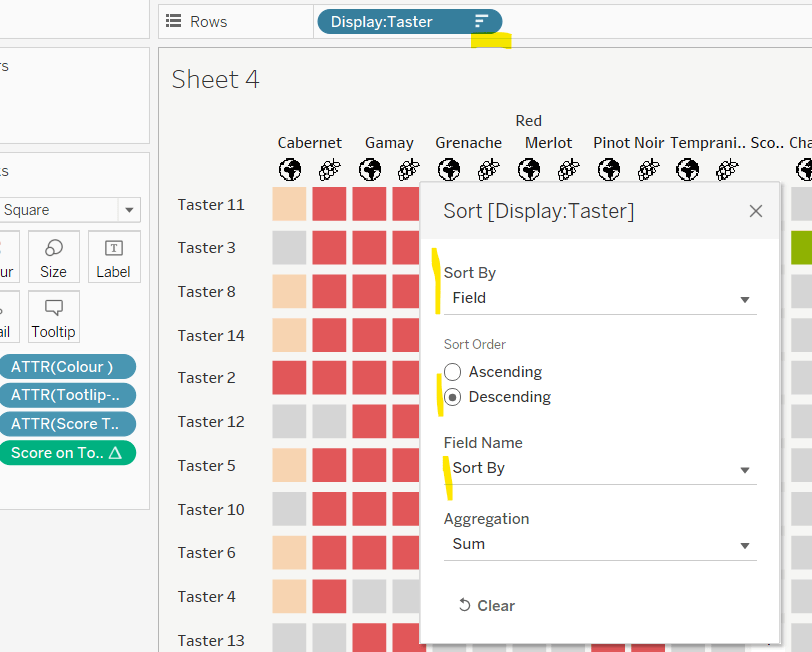

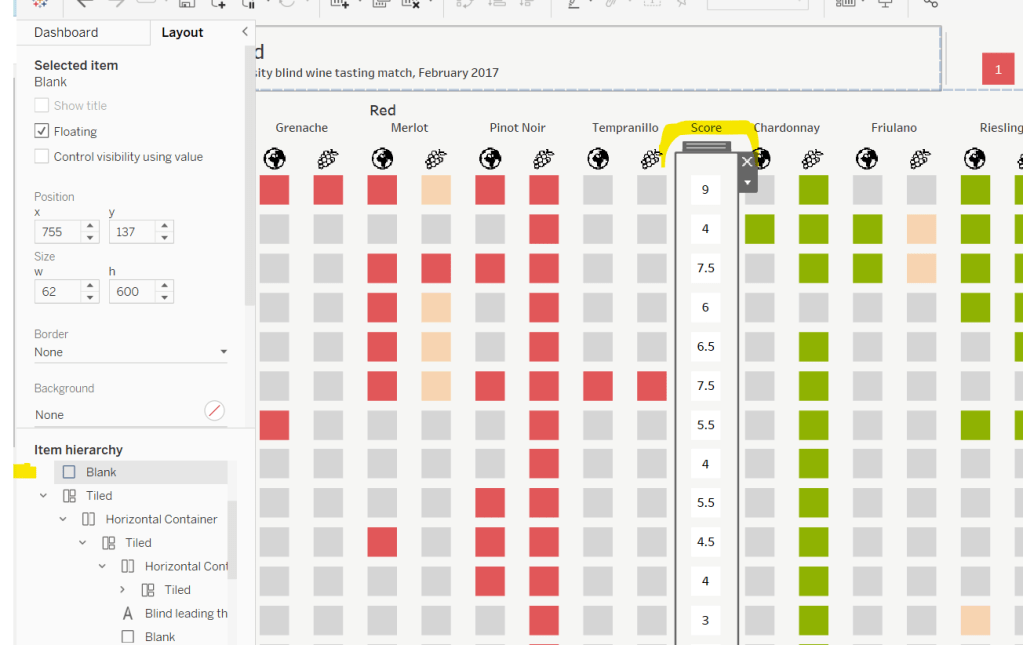

Kyle hinted that while the display looks like a trellis chart, it’s been built with multiple sheets – 1 per row. So we need to identify which row each set of sports is associated to, given there are 5 sports per row.

Row

INT((INDEX()-1)/5)

Make this discrete, and add this to Rows, and then adjust the table calculation so that it is explicitly computing by Sport, and we can see how each set of sports is ‘grouped’ on the same row.

Building the core viz

The viz is essentially a stacked bar chart of number of medallists by Age. But the bars are segmented by each individual medallist, and uniquely identified based on Name, Medal, Sport, Event, Year.

Add Sport to Columns, Age to Columns (as a continuous dimension – green pill) and Summer Olympic Medallists (Count) to Rows.

Change the mark type to Bar and reduce the Size a bit. Add Year, Event and Name to the Detail shelf, then add Medal to the Colour shelf and adjust accordingly. Re-order the colour legend, so it’s listed Bronze, Silver , Gold.

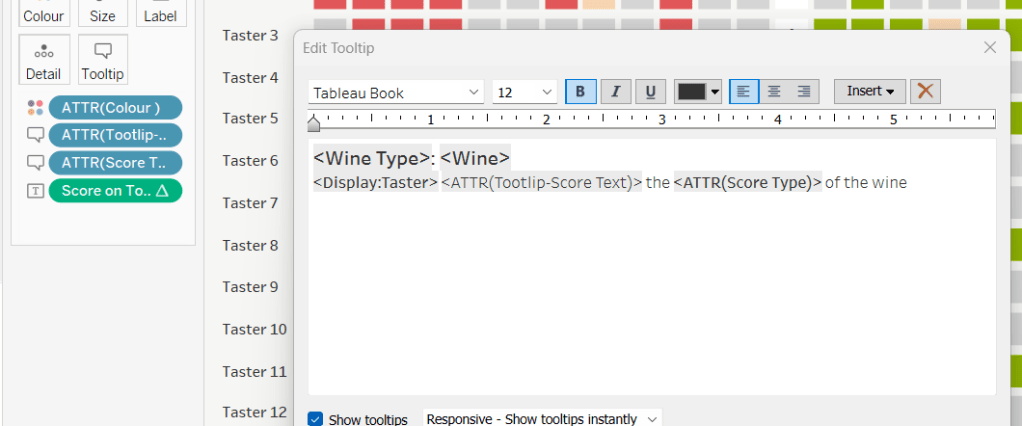

For the Tooltip we need to colour the type of medal, so need to create

Tooltip: Bronze

UPPER(IF [Medal] = ‘Bronze’ THEN [Medal] END)

Tooltip: Silver

UPPER(IF [Medal] = ‘Silver’ THEN [Medal] END)

Tooltip: Gold

UPPER(IF [Medal] = ‘Gold’ THEN [Medal] END)

Add all these to the Tooltip shelf, then adjust the Tooltip accordingly, referencing the 3 fields above and colouring the text.

The summary information for each sport displayed above each bar chart, is simply utilising the ‘header’ section of the display. We need to craft dedicated fields to display the text we need (note the spaces)

Heading – Medal Age

“Avg Medalling Age: ” + STR([Avg Age Per Sport])

Heading: Age per Medal

“Gold: ” + STR(IFNULL([Avg Age Per Sport – Gold],0)) + ” Silver: ” + STR(IFNULL([Avg Age Per Sport – Silver],0)) + ” Bronze: ” + STR(IFNULL([Avg Age Per Sport – Bronze],0))

Heading: Young | Old

“Youngest: ” + STR([Youngest Age Per Sport]) + ” Oldest: ” + STR([Oldest Age Per Sport])

Add each of these to Rows in the relevant order. Widen each column and reduce the height of each header row if need be

Adjust the font style of all the 4 fields in the header. I used

- Sport – Tableau Medium 14pt, black, bold

- Heading – Medal Age – Tableau Book 12pt, black, bold

- Heading: Age per Medal – Tableau Book 10pt, black bold

- Heading: Young | Old – Tableau Book 9pt, black

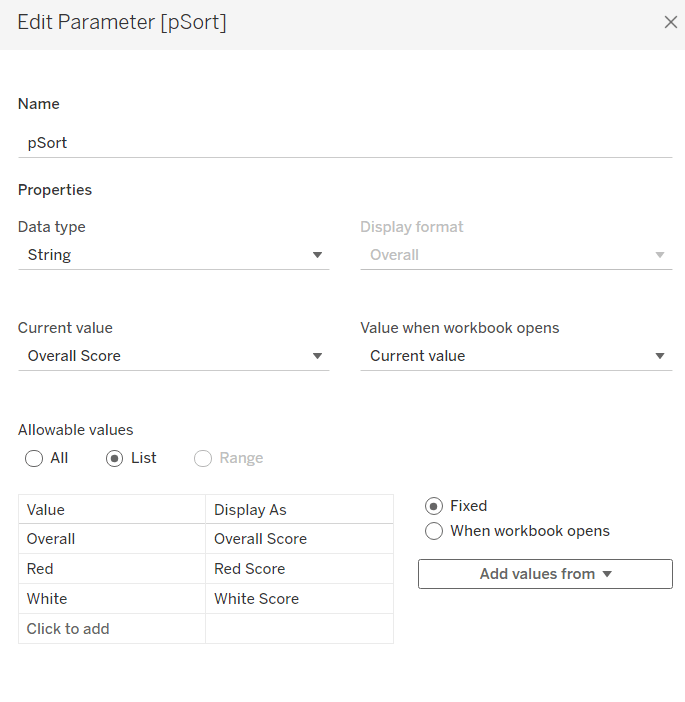

The title needs to reflect the number of medallists above a user defined age. To capture the age we need a parameter

pAge

integer parameter defaulted to 42

Then we can create

Total Athletes at Age+

{FIXED:COUNTD( IF [Age]>= [pAge] THEN [Name] END)}

Add this to the Detail shelf, then update the title of the viz to reference this field. Note the spacing to incorporate the positioning of the parameter later.

The chart also needs to show background banding based on the selected age. Add a reference line to the Age axis (right click axis > add reference line), which references the pAge parameter, and fills above the point with a grey colour

Ultimately this viz is going to be broken up into separate rows, but we need the Age axis to cover the same range. To ensure this happens, we need

Ref Line – Oldest Age

{FIXED: MAX([Oldest Age Per Sport])}

Add this to the Detail shelf, then add a reference line which references this field, but doesn’t actually ‘display’ anything (it’s invisible).

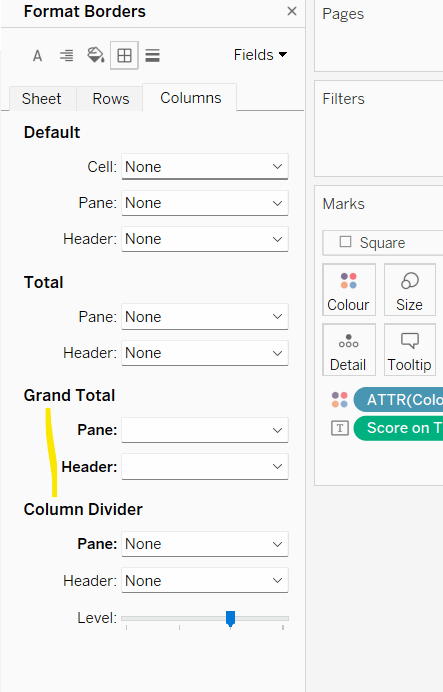

Tidy up the visual – remove gridlines, zero lines, row & column dividers. Hide the y-axis (uncheck show header). Remove the x-axis title. Hide the heading label (right click > hide field labels for columns). Hide the null indicator.

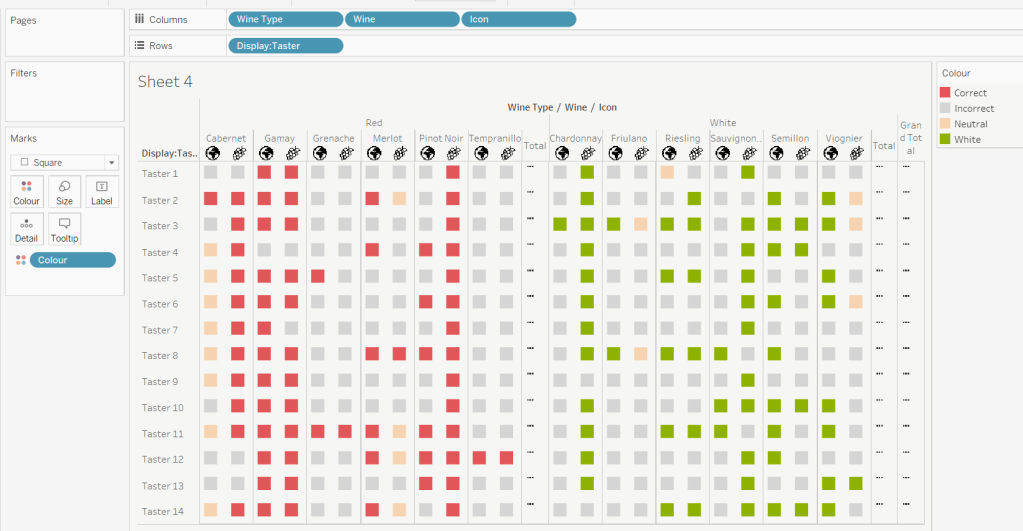

Filtering per row

Add the Row field to the Filter shelf and select the only option displayed (0). Then edit the table calculation and select all the fields to compute by, but set the level to Sport.

Show the Row filter and select the 0 option only. – only the first 5 sports are now displayed.

Name this sheet Row1. Then duplicate the sheet, set the Row filter to 1 and name the sheet Row2. Repeat so you have 7 sheets.

Building the dashboard

Use vertical containers to help position each of the 7 rows on the dashboard. For 6 of the sheets, the title needs to be hidden. I used a vertical container nested within another vertical container to contain the 6 sheets with no title. I then fixed the height of this ‘nested’ container and ‘distributed” the contents evenly. But I did have to do some maths based on the space the other objects (title & footer) used up on my dashboard, to work out what the height should be.

You’ll then need to float the pAge parameter onto your layout, and may find that after publishing to Tableau Public, you’ll need to edit online to tweak the positioning further.

My published viz is here.

Happy vizzin’!

Donna